KNOW YOUR WORLD. KNOW YOUR SELF.

UNPACK THE COMPLEXITY OF EVERYDAY LIFE

Explainer

Science + Technology

Machine without a ghost: The dangers of anthropomorphising AI

1 JUNE 26

-

Explainer

Society + Culture

A cage called freedom: Cultural diversity in contemporary literature

22 MAY 26

-

Opinion + Analysis

Business + Leadership

Can Australian business afford to ignore inequality?

18 MAY 26

-

Opinion + Analysis

Relationships

The Drama raises the thorny question of what it means to lie to those we love

15 MAY 26

-

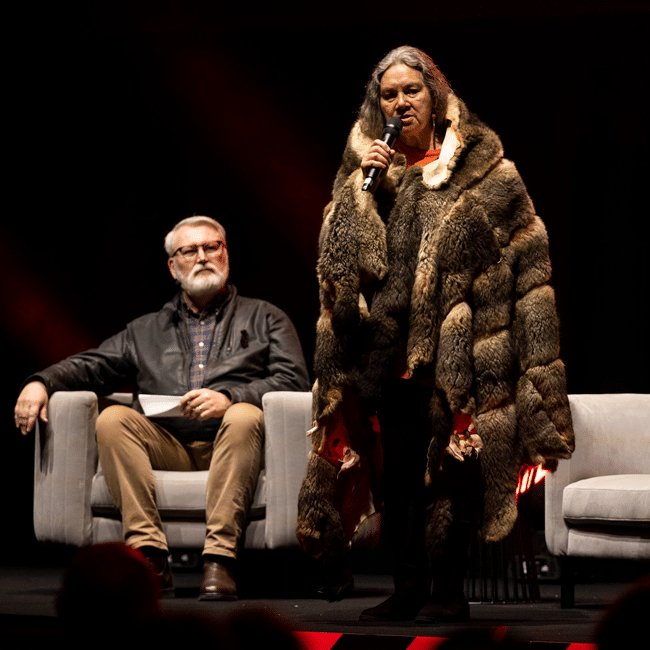

Explainer

Society + Culture

Welcome to Country comes from a place of deep, sincere respect

11 MAY 26

-

Explainer

Politics + Human Rights

Known, documented and ignored: Confronting the international politics of inaction

20 APRIL 26

-

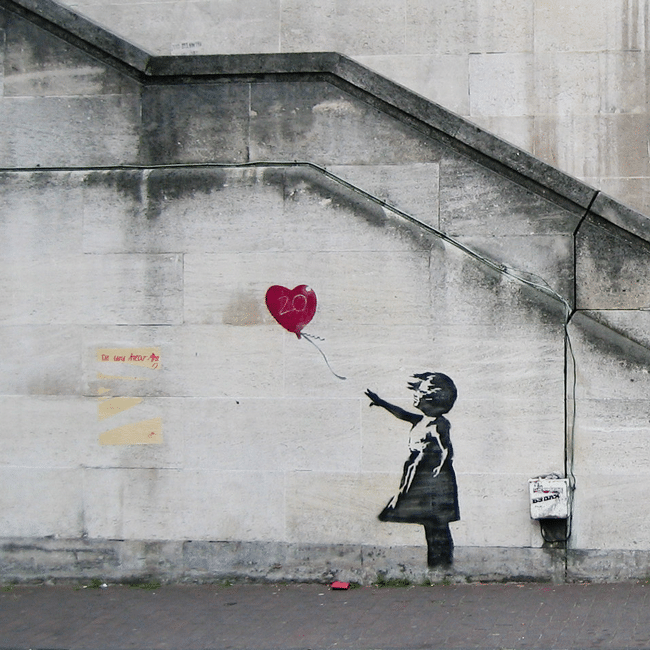

Explainer

Society + Culture

Behind the veil: When are we entitled to unmask the anonymous?

16 APRIL 26
